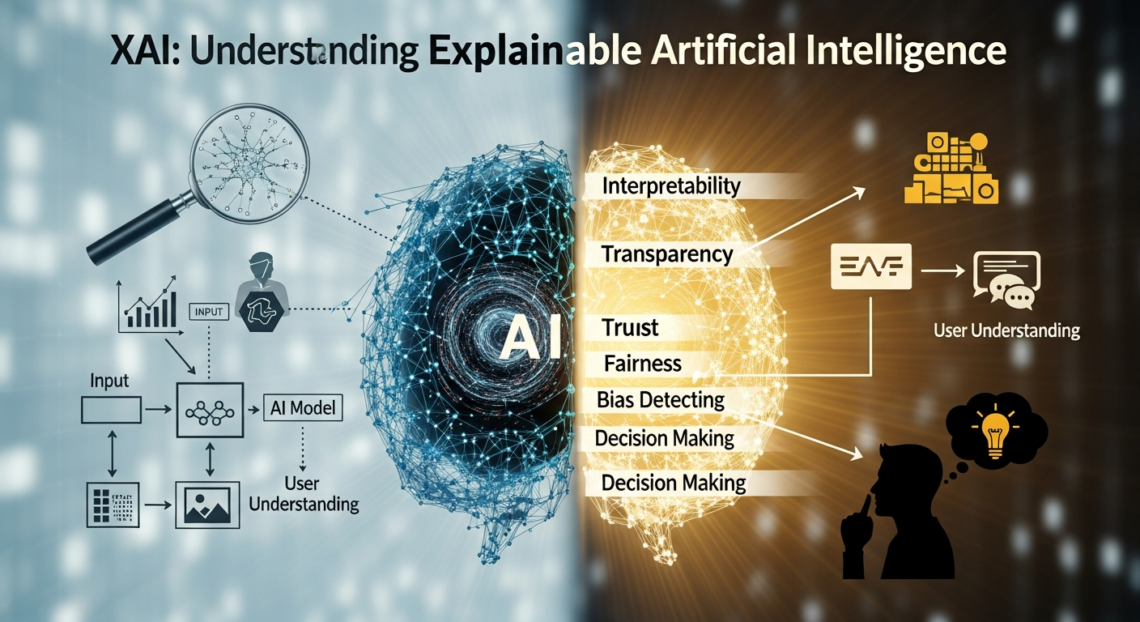

Artificial intelligence is transforming how decisions are made across industries, from healthcare and finance to marketing and transportation. However, as AI systems become more complex, they often operate as “black boxes,” making it difficult for humans to understand how outputs are produced. This lack of transparency has created trust, ethical, and regulatory concerns. Explainable Artificial Intelligence, commonly known as XAI, addresses this challenge by making AI systems more understandable and interpretable for humans.

XAI is not just a technical improvement but a critical step toward responsible and trustworthy AI adoption. As organizations increasingly rely on automated decision-making, understanding the reasoning behind those decisions becomes essential.

What Is XAI?

XAI, or Explainable Artificial Intelligence, refers to a set of methods and techniques designed to help humans understand, trust, and effectively manage artificial intelligence models. Unlike traditional AI systems that prioritize accuracy alone, XAI emphasizes transparency and interpretability alongside performance.

The goal of XAI is to explain how an AI system reaches a particular decision, prediction, or recommendation. These explanations can be provided to developers, business stakeholders, regulators, or end users, depending on the use case. By revealing the logic behind AI decisions, XAI bridges the gap between human understanding and machine intelligence.

Why XAI Matters in Modern AI Systems

The importance of XAI has grown rapidly as AI systems influence critical areas of society. When AI is used to approve loans, diagnose diseases, or assess job candidates, the consequences of incorrect or biased decisions can be severe.

XAI helps build trust by allowing users to see why a decision was made rather than blindly accepting an output. It also improves accountability, making it easier to identify errors, biases, or unfair outcomes. In regulated industries, XAI supports compliance by providing explanations that meet legal and ethical standards.

Without XAI, organizations risk deploying AI systems that are powerful but opaque, which can lead to mistrust, legal challenges, and reputational damage.

Core Concepts Behind XAI

At the heart of XAI are several foundational concepts that guide its development and implementation. Interpretability refers to how easily a human can understand the internal mechanics of an AI model. Transparency focuses on openness about how data is used and how decisions are made. Explainability combines both, offering meaningful insights into model behavior.

Another important concept in XAI is human-centered design. Explanations must be tailored to the audience, whether that audience consists of data scientists, business leaders, or everyday users. An explanation that is useful for a technical expert may not be suitable for a non-technical decision-maker.

Common Methods Used in XAI

XAI employs a variety of techniques to make AI systems more understandable. Some methods focus on simplifying models, while others add explanation layers to complex systems. Model-agnostic approaches can be applied to any AI model, regardless of its architecture, whereas model-specific methods are designed for particular algorithms.
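To make the idea of a model-agnostic approach concrete, here is a minimal sketch of permutation feature importance, one widely used technique of that kind. It only ever calls the model's prediction function, so it does not depend on the architecture. The `model` function, the synthetic data, and the helper names are all illustrative assumptions, not part of any particular library.

```python
import random

def model(row):
    # Hypothetical black-box scorer (an assumption for illustration):
    # strong dependence on feature 0, weak on feature 1, none on feature 2.
    return 3.0 * row[0] + 0.5 * row[1]

def mean_squared_error(predict, X, y):
    return sum((predict(r) - t) ** 2 for r, t in zip(X, y)) / len(X)

def permutation_importance(predict, X, y, n_repeats=20, seed=0):
    """Average increase in error when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mean_squared_error(predict, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            # Shuffle column j only, breaking its link to the target.
            column = [r[j] for r in X]
            rng.shuffle(column)
            X_perm = [r[:j] + [v] + r[j + 1:] for r, v in zip(X, column)]
            increases.append(mean_squared_error(predict, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

# Synthetic data: targets come straight from the model, so baseline error is zero.
data_rng = random.Random(42)
X = [[data_rng.uniform(-1, 1) for _ in range(3)] for _ in range(200)]
y = [model(r) for r in X]

importances = permutation_importance(model, X, y)
for j, value in enumerate(importances):
    print(f"feature {j}: importance {value:.3f}")
```

Because the function treats `predict` as an opaque callable, the same code could in principle be pointed at a neural network or a tree ensemble. A model-specific method, by contrast, would read internal quantities such as weights or split statistics.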

Visualization techniques are often used in XAI to show how input features influence outputs. Feature importance analysis highlights which variables play the biggest role in a decision. Local explanations focus on individual predictions, while global explanations describe overall model behavior.
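The difference between local and global explanations can be sketched with a small linear scoring model, where each feature's contribution can be read off exactly. The feature names, weights, and data below are hypothetical and chosen only for illustration. Complex models need approximation techniques to produce comparable numbers.

```python
# Hypothetical linear scoring model; names and weights are illustrative only.
FEATURES = ["income", "debt_ratio", "years_employed"]
WEIGHTS = [0.8, -1.5, 0.3]
BIAS = 0.2

def predict(row):
    return BIAS + sum(w * x for w, x in zip(WEIGHTS, row))

def feature_means(X):
    n = len(X)
    return [sum(r[j] for r in X) / n for j in range(len(X[0]))]

def local_explanation(row, means):
    # Contribution of each feature to this one prediction,
    # measured relative to the average input.
    return [w * (x - m) for w, x, m in zip(WEIGHTS, row, means)]

def global_explanation(X, means):
    # Mean absolute contribution of each feature across the dataset.
    totals = [0.0] * len(WEIGHTS)
    for row in X:
        for j, c in enumerate(local_explanation(row, means)):
            totals[j] += abs(c)
    return [t / len(X) for t in totals]

X = [
    [1.0, 0.2, 3.0],
    [0.5, 0.8, 1.0],
    [1.5, 0.4, 6.0],
    [0.8, 0.6, 2.0],
]
means = feature_means(X)
applicant = X[1]

local = local_explanation(applicant, means)   # explains one prediction
overall = global_explanation(X, means)        # describes the model as a whole

for name, c, g in zip(FEATURES, local, overall):
    print(f"{name}: local={c:+.3f} global={g:.3f}")
```

The local values answer "why did this applicant receive this score?", and they sum to the gap between the applicant's score and the score of an average input. The global values answer "which features matter most across all applicants?".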

Each method has strengths and limitations, and choosing the right XAI technique depends on the context, data, and audience.

XAI in Real-World Applications

XAI is already making a significant impact across multiple industries. In healthcare, explainable AI helps doctors understand why a model recommends a certain diagnosis or treatment, supporting clinical decision-making rather than replacing it. In finance, XAI is used to justify credit scoring decisions and to explain why a transaction was flagged as potentially fraudulent.

In manufacturing, XAI improves predictive maintenance by explaining why equipment failure is likely to occur. In marketing, it helps teams understand customer behavior and optimize campaigns more effectively. These real-world applications demonstrate that XAI is not theoretical but a practical necessity for responsible AI use.

Challenges and Limitations of XAI

Despite its benefits, XAI faces several challenges. One major issue is the trade-off between accuracy and interpretability. Highly complex models like deep neural networks often deliver better performance but are harder to explain. Simplifying these models can reduce accuracy, which may not be acceptable in certain scenarios.

Another challenge is the risk of misleading explanations. Not all explanations truly reflect how a model works, and poorly designed XAI systems can create a false sense of understanding. Additionally, generating explanations can increase computational costs and development time.
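One way to check whether an explanation reflects the model is to measure its fidelity: how closely a simple explanatory model reproduces the outputs of the system it claims to explain. The sketch below assumes a hypothetical `black_box` function and a hand-written `linear_surrogate`, and uses R² as the fidelity score. It illustrates the idea rather than prescribing an evaluation method.

```python
def black_box(row):
    # Hypothetical opaque model with an interaction between its two features.
    return 2.0 * row[0] + row[0] * row[1]

def linear_surrogate(row):
    # Simple explanation: "the output is driven by feature 0 alone."
    return 2.0 * row[0]

def fidelity(model, surrogate, X):
    """R^2 of the surrogate's outputs against the model's outputs on X."""
    targets = [model(r) for r in X]
    mean_target = sum(targets) / len(targets)
    ss_res = sum((t - surrogate(r)) ** 2 for t, r in zip(targets, X))
    ss_tot = sum((t - mean_target) ** 2 for t in targets)
    return 1.0 - ss_res / ss_tot

# Evaluate on a small, evenly spaced grid of inputs.
grid = [-1.0, -0.5, 0.0, 0.5, 1.0]
X = [[a, b] for a in grid for b in grid]

score = fidelity(black_box, linear_surrogate, X)
print(f"surrogate fidelity (R^2): {score:.3f}")
```

A score well below 1 signals that the explanation leaves out part of the model's behavior. Here the surrogate ignores the interaction term, so presenting it as the full story would give exactly the false sense of understanding described above.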

These limitations highlight the need for careful evaluation and continuous improvement of XAI methods.

The Role of XAI in Ethics and Regulation

Ethics and regulation play a crucial role in the growing adoption of XAI. Governments and regulatory bodies are increasingly demanding transparency in AI systems, particularly when they affect human rights or access to essential services.

XAI supports ethical AI by making bias and unfair outcomes easier to detect and correct, and by enabling informed consent. Users have the right to understand decisions that impact them, and XAI provides the tools to meet this expectation. Regulations such as data protection laws and emerging AI governance frameworks often require explainability as a core component.

As regulations evolve, XAI will become even more central to AI compliance strategies.

Best Practices for Implementing XAI

Successful implementation of XAI requires a thoughtful approach. Organizations should define clear goals for explainability early in the AI development process. Choosing the appropriate XAI techniques based on user needs and risk levels is essential.

Testing explanations with real users helps ensure they are meaningful and actionable. Continuous monitoring is also important, as models and data change over time. Integrating XAI into the overall AI lifecycle, rather than treating it as an afterthought, leads to better outcomes.

The Future of XAI

The future of XAI is closely tied to the future of artificial intelligence itself. As AI systems become more autonomous and embedded in daily life, the demand for transparency will continue to grow. Advances in research are making it possible to explain even the most complex models more effectively.

XAI is also expected to play a key role in human-AI collaboration, where machines and humans work together rather than independently. By making AI decisions understandable, XAI empowers people to use AI as a trusted partner rather than an opaque tool.

Conclusion

XAI represents a critical evolution in artificial intelligence, shifting the focus from raw performance to understanding and trust. By making AI systems more transparent and interpretable, XAI addresses ethical, legal, and practical concerns that arise from black-box models.

As organizations increasingly rely on AI for high-stakes decisions, XAI is no longer optional. It is a foundational requirement for responsible, fair, and trustworthy artificial intelligence.

FAQs About XAI

What does XAI stand for?

XAI stands for Explainable Artificial Intelligence. It refers to methods and techniques that make AI systems easier for humans to understand and interpret.

Why is XAI important for businesses?

XAI helps businesses build trust, meet regulatory requirements, reduce risk, and improve decision-making by explaining how AI models arrive at their conclusions.

Is XAI only used in regulated industries?

No. While XAI is critical in regulated industries like healthcare and finance, it is also valuable in marketing, manufacturing, education, and many other fields.

Does XAI reduce the accuracy of AI models?

In some cases, simpler and more interpretable models may be slightly less accurate. However, many XAI techniques are applied after training and explain a complex model without modifying it, so its predictive performance is unaffected.

Will XAI become mandatory in the future?

As AI regulations continue to evolve, explainability is likely to become a standard requirement, making XAI an essential component of future AI systems.